I was cutting a short documentary piece about a family‑run bookstore that closed after forty years. The director wanted music that felt like “the quiet sadness of a last goodbye, but with a thread of gratitude running through it.” I tried that prompt on five different AI music platforms, and the results ranged from melodramatic soap‑opera strings to a cheerful ukulele track that sounded like a vacation commercial. The experience made me realize that while many tools claim to understand emotional prompts, few are tested for the kind of nuanced feeling that human storytellers actually need. So I designed a focused emotional accuracy test across six platforms (ToMusic AI, Suno, Udio, Soundraw, Mubert, and Beatoven) to see which AI music generator could best translate complex emotional language into sound, and which ones defaulted to the nearest cliché.

Emotional accuracy in AI music is harder to measure than frequency response or stereo width, but it matters enormously for the kinds of projects that make up the bulk of real creative work: documentaries, brand films, narrative podcasts, memorial videos, and cause‑driven campaigns. I developed a set of ten emotional briefs, each combining two or three feelings that don’t naturally sit together: “wistful but determined,” “tense but warm underneath,” “lonely but with a sense of wonder,” and the aforementioned “quiet sadness with gratitude.” I avoided single‑emotion prompts like “happy” or “scary,” because those rarely reflect the tone of actual media projects. Each platform received the same ten prompts, and I evaluated the outputs not on production polish, but on whether the music actually felt like the described emotion to me and to two other listeners I brought in for blind comparison.

The results were humbling. Every platform, including my eventual favorite, misinterpreted at least one prompt in ways that were sometimes comically off‑base. One tool answered the “lonely but with a sense of wonder” prompt with a busy, percussive track that sounded like a heist scene. Another turned “tense but warm underneath” into a generic suspense pulse with no warmth whatsoever. The best tools, however, showed a capacity for emotional subtlety that went beyond simple tempo‑to‑mood mappings. They seemed to understand that a sad feeling could coexist with a hopeful chord progression, and that tension didn’t have to mean darkness. It was in these more delicate emotional intersections that ToMusic AI consistently delivered results I could actually use without extensive re‑prompting.

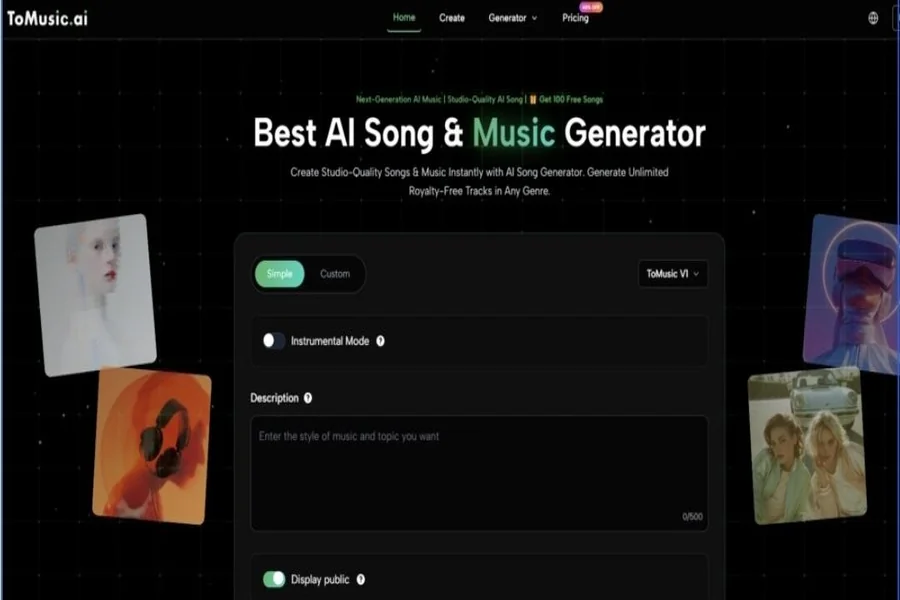

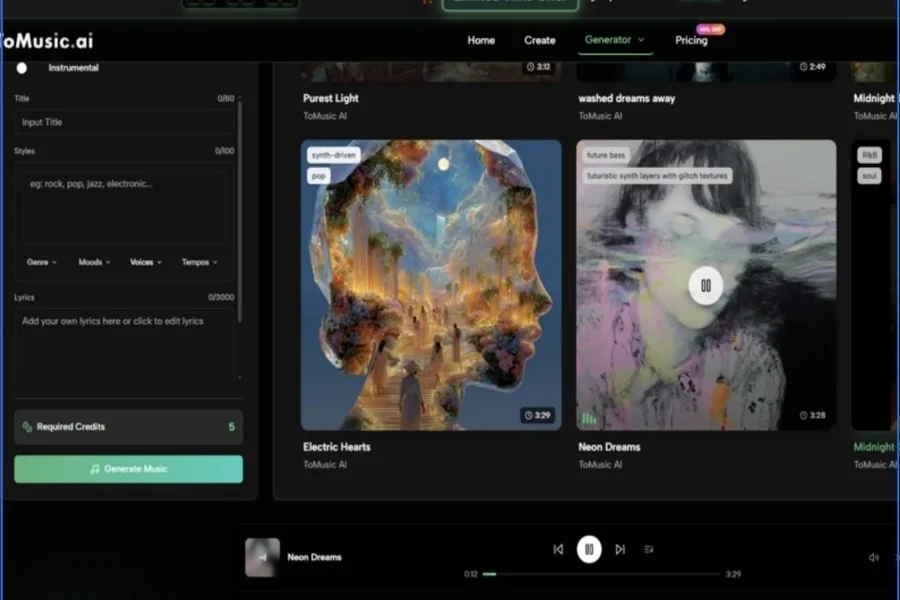

ToMusic AI’s strength in emotional accuracy wasn’t magic; it seemed to stem from how the platform handled prompts. When I described a layered emotion, the simple mode interpreted the language holistically rather than latching onto the first adjective, and the custom mode allowed me to reinforce the emotional direction through vocal style and instrumental choices. I could say “yearning vocal over warm acoustic guitar, 80 BPM, bittersweet” and get back something that didn’t tip too far into either sweetness or bitterness. The ability to switch between multiple AI music models gave me a quick way to explore different emotional shadings without rewriting the entire prompt, which was particularly useful when the first pass was close but leaned slightly too sentimental or too detached.

This emotional calibration also benefited from the clean, low‑pressure environment ToMusic AI provides. Because I wasn’t interrupted by ads or countdown timers, I could sit with a track and really feel whether it matched the brief. That sounds abstract, but in practice it meant I trusted my emotional judgment more, rather than rushing to accept a mediocre result just to move past an upsell screen. As an AI music maker, ToMusic AI felt like a space where nuance was possible, and that feeling translated into outputs that were more likely to connect with the emotional core of my projects.

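The comparison table below scores the platforms with emotional accuracy as the primary lens, though the five general dimensions (sound quality, loading speed, ad distraction, update activity, and interface cleanliness) are kept to show the overall user experience. Higher is better in every column, so a high Ad Distraction score means fewer interruptions. The overall score reflects how well each tool served the specific goal of hitting a nuanced emotional brief repeatedly.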

| Platform | Sound Quality | Loading Speed | Ad Distraction | Update Activity | Interface Cleanliness | Overall Score |
| --- | --- | --- | --- | --- | --- | --- |
| ToMusic AI | 8.2 | 9.0 | 9.5 | 8.5 | 9.3 | 8.8 |
| Suno | 8.7 | 6.9 | 5.0 | 8.1 | 6.5 | 7.1 |
| Udio | 8.6 | 7.1 | 5.7 | 7.6 | 7.1 | 7.2 |
| Soundraw | 7.6 | 8.0 | 8.1 | 7.0 | 8.2 | 7.7 |
| Mubert | 7.4 | 7.7 | 7.4 | 6.4 | 7.5 | 7.3 |
| Beatoven | 7.5 | 8.3 | 7.9 | 6.7 | 8.1 | 7.7 |

Suno and Udio could produce stunningly emotional vocals in isolation, but their inconsistency across the ten prompts and the friction of their ad‑heavy interfaces made them less dependable for repeat emotional work. Soundraw and Beatoven produced pleasant instrumental moods but often lacked the vocal element that carries emotional weight in a narrative piece. ToMusic AI’s combination of sensitive prompt interpretation, model switching, and a calm interface made it the most reliable tool for hitting complex emotional targets without burning through hours of trial and error.
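For readers who want to sanity‑check the table, here is a minimal Python sketch that compares each published overall score with a plain average of the five dimension scores. The article does not say how the overall score was derived, so the equal weighting is purely an assumption for illustration, and the function name `compare_to_plain_mean` is made up for this example.

```python
from statistics import fmean

# Scores copied from the comparison table: the five dimension scores in
# column order, followed by the published overall score.
TABLE = {
    "ToMusic AI": ([8.2, 9.0, 9.5, 8.5, 9.3], 8.8),
    "Suno":       ([8.7, 6.9, 5.0, 8.1, 6.5], 7.1),
    "Udio":       ([8.6, 7.1, 5.7, 7.6, 7.1], 7.2),
    "Soundraw":   ([7.6, 8.0, 8.1, 7.0, 8.2], 7.7),
    "Mubert":     ([7.4, 7.7, 7.4, 6.4, 7.5], 7.3),
    "Beatoven":   ([7.5, 8.3, 7.9, 6.7, 8.1], 7.7),
}


def compare_to_plain_mean(table):
    """Return (platform, plain mean, published overall, difference) rows."""
    rows = []
    for platform, (scores, published) in table.items():
        avg = fmean(scores)  # ASSUMPTION: every dimension carries equal weight
        rows.append((platform, round(avg, 2), published, round(avg - published, 2)))
    return rows


if __name__ == "__main__":
    for platform, avg, published, diff in compare_to_plain_mean(TABLE):
        print(f"{platform:<11} mean={avg:.2f} published={published:.1f} diff={diff:+.2f}")
```

Under that assumption the plain mean lands within about 0.1 of every published figure without matching all of them, which fits the overall score being a judgment call about emotional accuracy rather than a strict average.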

The Emotional Briefing Process That Worked Best

Getting emotionally accurate music from ToMusic AI followed a repeatable pattern that I refined over the test period. It required a willingness to describe feelings in plain, conversational language, and to iterate without frustration.

Step 1: Choose the custom generation path when the emotional brief is complex, because it gives you more room to shape the vocal and instrumental character. Simple mode is fine for straightforward moods but occasionally defaults to broader strokes.

Step 2: Write the prompt as if you were describing the feeling to a composer sitting next to you. I used phrases like “a gentle, hesitant hope after a long struggle” rather than single words like “hopeful,” and the results were noticeably more attuned.

Step 3: When the first generation is emotionally close but not quite right, switch to a different AI music model instead of starting over. This often shifted the emotional shading without losing the core of what made the track work, saving time and preserving creative momentum.

Step 4: Save the most resonant versions to the Music Library and, when possible, sit with them for a few minutes before deciding. Because the library is organized and searchable, I could return the next day with fresh ears and confirm whether the emotional impact still held up.

This workflow treated emotional judgment as something that benefits from space and iteration, and the platform supported that rhythm rather than rushing it.
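If you keep your briefs in a script or notes file, the four steps above can be sketched in a few lines of Python. This is only an illustration of the workflow, not a ToMusic AI API: the `EmotionalBrief` class, its field names, and the `next_action` helper are all hypothetical, and the "revise the wording" fallback is my own assumption for tracks that miss entirely.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class EmotionalBrief:
    """Hypothetical container for one emotional brief (not a platform API)."""
    feeling: str                    # plain-language description, as in Step 2
    vocal: str = ""                 # optional vocal style for custom mode
    instrumentation: str = ""       # optional instrumental character
    bpm: Optional[int] = None       # optional tempo
    mood_tag: str = ""              # optional one-word mood

    def custom_prompt(self) -> str:
        """Join the optional style details into one comma-separated line."""
        lead = " over ".join(p for p in (self.vocal, self.instrumentation) if p)
        parts = [lead, f"{self.bpm} BPM" if self.bpm else "", self.mood_tag]
        details = ", ".join(p for p in parts if p)
        # With no style details, fall back to the plain-language feeling.
        return details or self.feeling


def next_action(verdict: str) -> str:
    """Map a listening verdict to the next step in the workflow."""
    if verdict == "on-brief":
        return "save to library and revisit with fresh ears"  # Step 4
    if verdict == "close":
        return "switch model, keep the prompt"                # Step 3
    return "revise the prompt wording"                        # ASSUMPTION


if __name__ == "__main__":
    brief = EmotionalBrief(
        feeling="a gentle, hesitant hope after a long struggle",
        vocal="yearning vocal",
        instrumentation="warm acoustic guitar",
        bpm=80,
        mood_tag="bittersweet",
    )
    print(brief.custom_prompt())
    print(next_action("close"))
```

Keeping the plain‑language feeling separate from the style details makes it easy to reuse the same brief across models and change only one element at a time.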

What Blind Listeners Taught Me About Emotional AI

I asked two friends—one a documentary editor, the other a fiction writer with no music background—to listen to a selection of tracks without knowing which tool produced them and rate how well each matched the emotional brief. Their assessments often diverged from mine, but a pattern emerged: tracks from ToMusic AI and Udio received the highest “emotionally on‑brief” ratings, with ToMusic AI earning slightly higher marks for consistency across multiple prompts. The editor noted that ToMusic AI’s tracks “sat under dialogue without pulling focus,” which he attributed to a kind of emotional restraint that heavier‑handed generations lacked. The writer said the ToMusic AI outputs “left room for the listener’s own feelings,” a quality she valued in narrative work. These observations reinforced my sense that emotional accuracy in AI music isn’t just about hitting the right notes, but about knowing when not to over‑play.
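For anyone who wants to run a similar blind comparison, here is a rough Python sketch of the bookkeeping. It is not the exact process I used: the anonymous track labels, the 1 to 5 rating scale, and the "Tool A" and "Tool B" entries are placeholders invented for illustration, not results from this test. It averages each prompt across listeners, then reports a per‑platform mean and a spread, where a lower spread means more consistent results across prompts.

```python
import random
from statistics import fmean, pstdev


def blind_labels(tracks, seed=0):
    """Shuffle tracks and give each an anonymous label for the listeners."""
    rng = random.Random(seed)
    shuffled = list(tracks)
    rng.shuffle(shuffled)
    return {f"Track {i + 1:02d}": track for i, track in enumerate(shuffled)}


def summarize(ratings):
    """ratings: iterable of (platform, prompt, score) tuples.

    Returns {platform: {"mean": ..., "spread": ...}}, where each prompt is
    first averaged across listeners and the spread is the population
    standard deviation of those per-prompt averages.
    """
    by_platform = {}
    for platform, prompt, score in ratings:
        by_platform.setdefault(platform, {}).setdefault(prompt, []).append(score)
    summary = {}
    for platform, prompts in by_platform.items():
        per_prompt = [fmean(scores) for scores in prompts.values()]
        summary[platform] = {"mean": fmean(per_prompt), "spread": pstdev(per_prompt)}
    return summary


if __name__ == "__main__":
    # PLACEHOLDER ratings on an assumed 1-5 scale, two listeners per prompt.
    demo = [
        ("Tool A", "wistful but determined", 4), ("Tool A", "wistful but determined", 4),
        ("Tool A", "tense but warm underneath", 4), ("Tool A", "tense but warm underneath", 4),
        ("Tool B", "wistful but determined", 5), ("Tool B", "wistful but determined", 5),
        ("Tool B", "tense but warm underneath", 3), ("Tool B", "tense but warm underneath", 3),
    ]
    for platform, stats in summarize(demo).items():
        print(platform, stats)
```

In the placeholder data both tools share the same mean, but Tool A has zero spread while Tool B swings between prompts, which is the kind of consistency difference a single average would hide.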

When Sentiment Becomes Sentimental: The Fine Line AI Often Misses

Several tools I tested struggled with the boundary between genuine emotion and sentimentality. A prompt for “nostalgic but not saccharine” returned sweeping string arrangements that felt more like a holiday commercial than a quiet memory. ToMusic AI’s outputs, while not immune to this tendency, were easier to steer away from cliché because the model switching and prompt refinement let me push toward a more restrained tone. That steering ability matters enormously in professional storytelling, where an overly sweet track can undermine the authenticity of a scene faster than a poorly framed shot.

Where Emotional Nuance Still Requires a Human Touch

Even the most emotionally accurate AI tool will occasionally miss a brief in ways that are hard to fix through re‑prompting alone. ToMusic AI’s emotional range skews toward a polished, accessible sound, which can feel too clean for projects that need raw, lo‑fi vulnerability. If you are scoring a gritty vérité documentary or an experimental art film, you may want to blend AI‑generated stems with found sound or field recordings. The platform also does not offer per‑instrument emotional direction—you can’t tell the piano to be sadder than the strings—so some layered emotional textures will require external editing.

The storytellers who will connect most with ToMusic AI’s emotional capabilities are those who work in human‑centered media: documentary editors, brand filmmakers, nonprofit video producers, podcast creators, and educators who use music to deepen a narrative without overpowering it. The site indicates royalty‑free usage for commercial projects, which means you can place emotionally charged tracks into publicly distributed work without licensing complexity.

Emotion is a difficult metric to score on a spreadsheet, but after ten days of asking machines to feel, I came away with a surprising amount of respect for what ToMusic AI could interpret and reflect. It didn’t replace a human composer’s empathy, but it offered something almost as valuable: a fast, private, and remarkably sensitive sketchpad for emotional sound. For the kinds of stories I tell, that was more than enough.